Reinvent a retailer’s data & analytics interviewing process (preface)


- Preface: Reinvent a retailer’s data & analytics interviewing process (this post)

- Data science interviews: What we screen for? What we will not?

- Data science interviews: Grading rubrics (coming soon)

Ambitions to refine the process

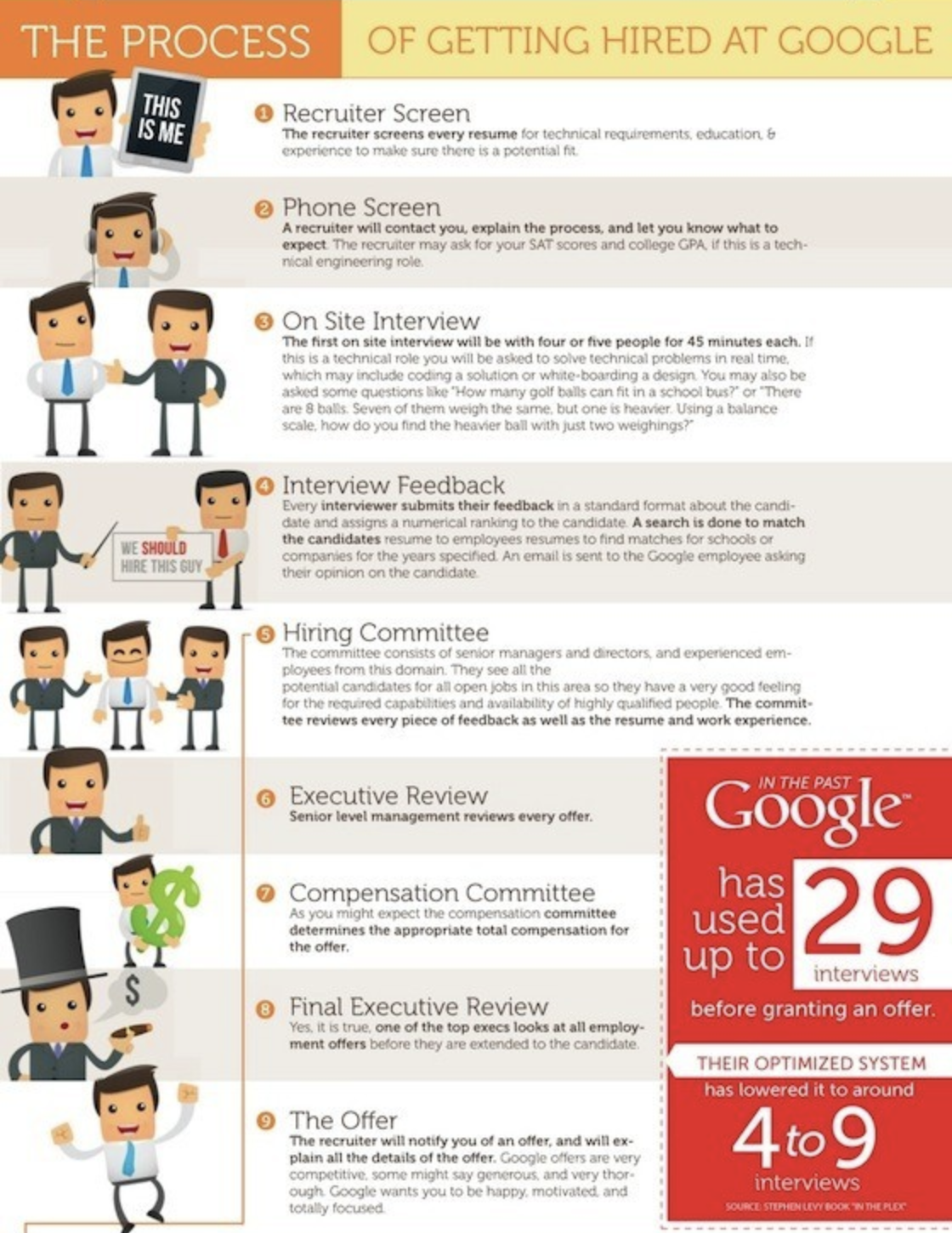

Hiring is one of the most important things a company must get right. It's no accident that companies like Facebook and Google go to great lengths to make sure they hire the right person for the job. You can read more about what it takes to get hired at Google here.

I have built data & analytics teams from scratch before, but our hiring process needs a lot of improvement.

The process of getting hired at Google http://dialoguereview.com/take-get-a-job-at-google/


No clear hiring rubric

We have a rough idea in our heads of what an ideal candidate looks like, but we have never developed a rubric to score candidates against. As a result, our hiring decisions are based solely on our impressions of the candidate. Without a rubric, you tend to ask whatever questions pop into your mind, write sketchy debriefs, and rely on luck that the candidate will tick all your boxes.

What are we looking for?

We never spend much time writing job descriptions. Instead, we Google the position and put together a job description (JD) that we think matches the role. We also never clearly define what we mean when we say we are looking for people with Python/R coding experience, critical thinking, or problem-solving skills.

Bad hiring process

We usually start our hiring process with an HR phone screen, then send out a technical test. We evaluate the test (again, with no rubric) and decide whether to invite the candidate for an in-person interview. There, the candidate has 30 minutes to present their findings, after which the interviewer(s) (often the hiring manager) begin the interview. Afterward, HR conducts an additional culture-fit interview.

There are several things wrong with this approach:

- The hiring manager tends to make biased hiring decisions

- Without a rubric for the test, candidates with better presentation skills have an edge

- The test should be designed to screen for the skills we want in our candidates

- Team members have no say in hiring the people they will work with

- No bar raiser (hiring managers are sometimes desperate; without a bar raiser, they tend to hire subpar people, who in turn hire more subpar people)

What don’t we look for?

We provide no guidance to interviewers on the things that are unimportant. The tech industry has a habit of screening candidates on criteria that are uncorrelated (and in some cases negatively correlated) with job performance. By failing to be explicit about the things that we consider unimportant, we risk letting people make decisions based on them. (Engineering interview — refining our interview process)

Objectives

In the next part of this series, I will address the above issues by refining our hiring process. We consider this project a success if it helps us:

- Hire the right people

- Be consistent and objective in our hiring decisions

- Ask the right questions to get to know our candidates

- Communicate our process and objectives to interviewers and interviewees

Next steps

I will continue developing this series over the coming weeks and will add links to future posts here. I plan to extend the series with two more posts:

Part 1: What we screen for? What we will not?

I will outline what we screen for in our data positions: data analyst, data engineer, and data scientist. I will also spell out the criteria we won’t use to judge our candidates.

Part 2: Grading rubric

I will describe each desired category on a five-level scale: Strong No, No, Mixed, Yes, and Strong Yes. I will also create a template that our interviewers can use in their interviews.

We are hiring

Thank you for reading! We are looking for talented data analysts, data engineers, and data scientists to join our team. Please reach out to me at v.tuanna83@vincommerce.com for more info.